Renée DiResta testified before the Committee on House Administration Subcommittee on Elections in a hearing titled "A Growing Threat: Foreign and Domestic Sources of Disinformation" on July 27, 2022. DiResta is the Technical Research Manager of the Stanford Internet Observatory (SIO) at Stanford University. The SIO is one of the collaborators in the Election Integrity Partnership (EIP), which was formed in 2020, about ten months before the Presidential election. At the heart of this endeavor is one of our most fundamental rights: the right to free speech as outlined by the First Amendment. Behind closed doors, our government is using people like DiResta and her collaborators to define the narrative, weaponizing speech in the name of national security.

The EIP is now famous for assembling a list of the top "repeaters" of mis- and/or disinformation, including but not limited to actor James Woods, the Trump family, Rep. Marjorie Taylor Greene, TN Congressional candidate Robby Starbuck and TPUSA's Charlie Kirk. The EIP also identified repeat offenders from various news outlets and groups who allegedly spread and/or amplified "false" information across a variety of social media platforms.

The EIP was formed to partner with the government to "defend our elections against those who seek to undermine them by exploiting weaknesses in the online information environment." DiResta and her colleagues are laser-focused on how public opinion is shaped by online information and interaction. Concerned about the threats to the "democratic process," their mission is to narrowly focus on topics like elections and even public health. The EIP final report fixates on issues that are "harmful" to democracy (wrong speak), namely "attempts to suppress voting, reduce participation, confuse voters, or delegitimize election results without evidence. We are interested in these dynamics both during the election cycle as well as after the election, when public perceptions of its legitimacy continue to be formed," according to the EIP homepage.

As a result of her collaboration on EIP, DiResta played a key role in setting online "mis- and disinformation" policies for the Biden administration's Office of Digital Strategy (ODS). The Director of ODS is Rob Flaherty, one of the top-ranking officials slated to be deposed in the important Missouri v. Biden lawsuit, a suppression of speech case.

Renée DiResta/http://www.reneediresta.com/

DiResta's testimony features several "key takeaways." The internet and social media platforms are key vectors for mis- and disinformation, causing rumors or "a false impression before facts are known." She says that before the 2020 election, "misleading narratives" came from both the top and the bottom of the information food chain. Influential accounts posted information, and that information was amplified by "everyday people." Her solution for this scourge is a "whole of society approach" characterized by the identification of election-related rumors, analysis of their "capacity for harm," and efforts to combat the harm with "up-to-date information" for "constituents in service to facilitating an informed electorate." DiResta's testimony below begins at the 31:55 timestamp.

Fauci Uses DiResta Tactic to Flood the System with Truth

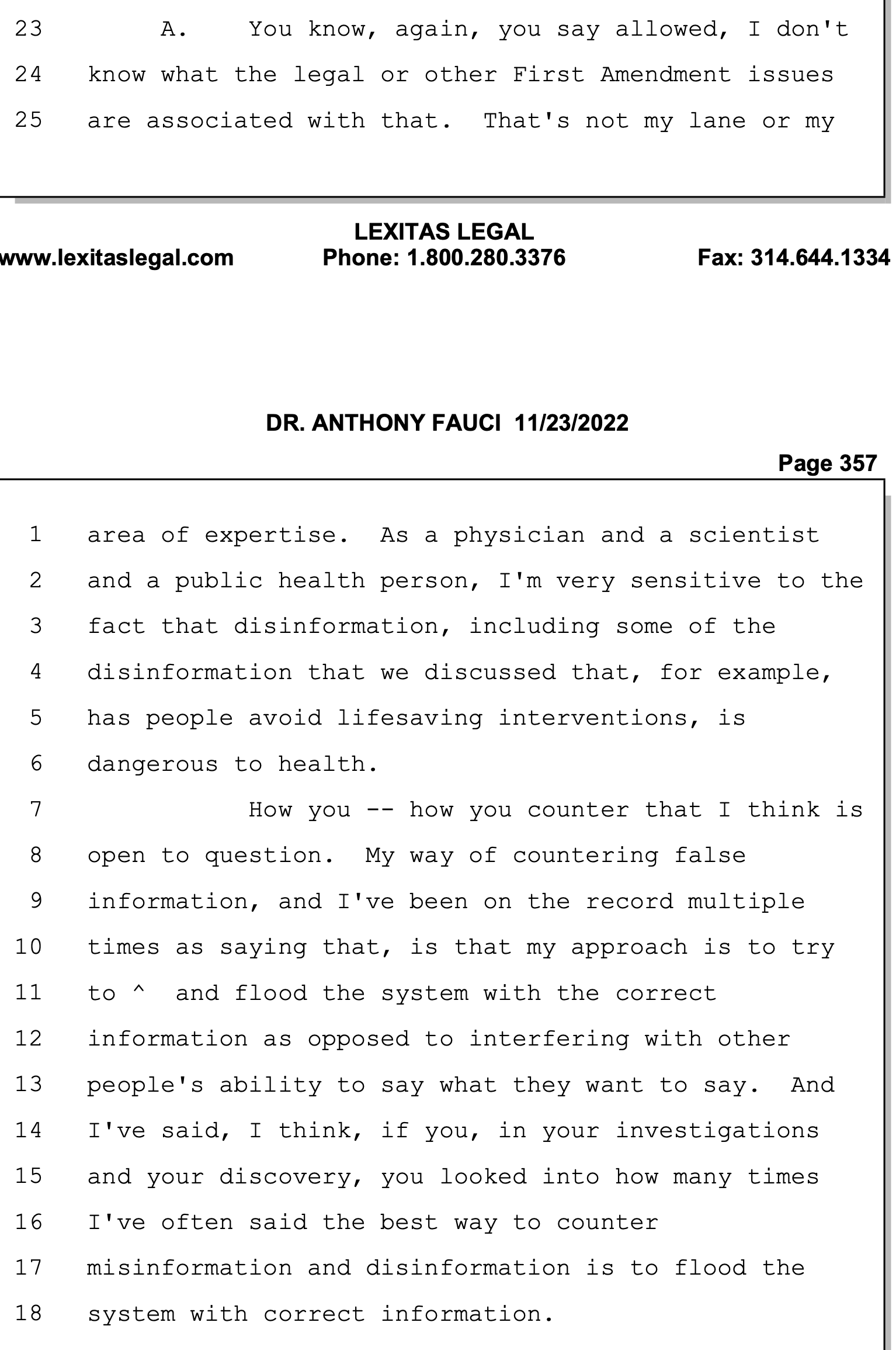

Notably, the recently published videotaped deposition that Anthony Fauci, director of the National Institute of Allergy and Infectious Diseases (NIAID) at the NIH, gave on November 23 for Missouri v. Biden showed he used a similar tactic to combat mis- and disinformation on various topics related to the pandemic. While he repeatedly testified he "didn't put misinformation squarely on [his] radar screen" (p. 340) and he "doesn't pay attention to things related to social media accounts" (p. 301), Fauci says he relies on what he called "flooding the system with the correct information as opposed to interfering with other people's ability to say what they want to say" (pp. 356-357). Whether or not he participated directly in the suppression of speech on social media, it is laughable to think this guy didn't realize his potential influence based on the size of his megaphone or have an awareness of the battle lines that were being drawn. Fauci's emails alone show he was aware of the various pandemic-related narratives that were circulating. He demonstrates a Clintonesque ability to "not recall" people he has met and issues he has discussed. Frankly, it is amazing he has been able to function in his important role at the NIH for so many years.

Anthony Fauci/Deposition November 23, 2022/Pp. 356, 357

Missouri Attorney General Eric Schmitt explains some of what is in the transcript of Fauci's deposition:

DiResta and Starbird: Twin Powers Activated

DiResta and Kate Starbird, who founded the Center for an Informed Public at the University of Washington, led the team of researchers who ultimately produced a 2021 final report entitled "The Long Fuse: Misinformation and the 2020 Election." The Atlantic Council's Digital Forensic Research Lab (DFRLab) and Graphika also partnered with DiResta and Starbird to investigate, for the Biden administration, "influence operations and the spread of narratives across social and media networks," according to DiResta's testimony in July. Much of the impetus for their final report was the "January 6 insurrection," which was "the culmination of months of online mis- and disinformation directed toward eroding American faith in the 2020 election."

EIP/List of Contributors/p. xii

One of the concepts the DFRLab explores is the "democratization of deception," which means that deception campaigns are no longer solely the purview of state actors; instead, everyone is now a potential participant.

"A really interesting feature of the cyber revolution is the democratization of deception. The classic strategies of intelligence—espionage, subversion, disinformation, counterintelligence, and secret diplomacy—that were once practiced mainly by states are now within reach of many actors. The more interesting variation may be in capabilities—states can do more for many reasons—than in strategy. Like it or not, we are all actors, intermediaries, and targets of intelligence."

Graphika prides itself on being the "cartographers of the internet age." According to their homepage, "Graphika leverages the power of artificial intelligence to create the world's most detailed maps of social media landscapes. We pioneer new analytical methods and tools to help our partners navigate complex online networks."

"The Long Fuse" Report

The January 6 "insurrection" was the driving force behind the Long Fuse Report. One can surmise that these four collaborating organizations saw the information being spread on the internet about the 2020 elections (known as "The Big Lie" in their circles) as a "long fuse" or a long march toward what people like DiResta and Starbird perceive to be an inevitable catastrophic event. According to the report, they collaborated with "dozens of federal agencies [who] support this effort, including the Cybersecurity and Infrastructure Security Agency (CISA) within the Department of Homeland Security, the United States Election Assistance Commission (EAC), the FBI, the Department of Justice, and the Department of Defense." Their role as non-governmental entities, they write, is to fill in the gaps (arguably to launder) that the government is unable to fill because we are a constitutional republic, and such activity is the currency of tyrants. These organizations specialize in "information dynamics." The report elaborates:

"However, none of these federal agencies has a focus on, or authority regarding, election misinformation originating from domestic sources within the United States. This limited federal role reveals a critical gap for non-governmental entities to fill. Increasingly pervasive mis- and disinformation, both foreign and domestic, creates an urgent need for collaboration across government, civil society, media, and social media platforms."

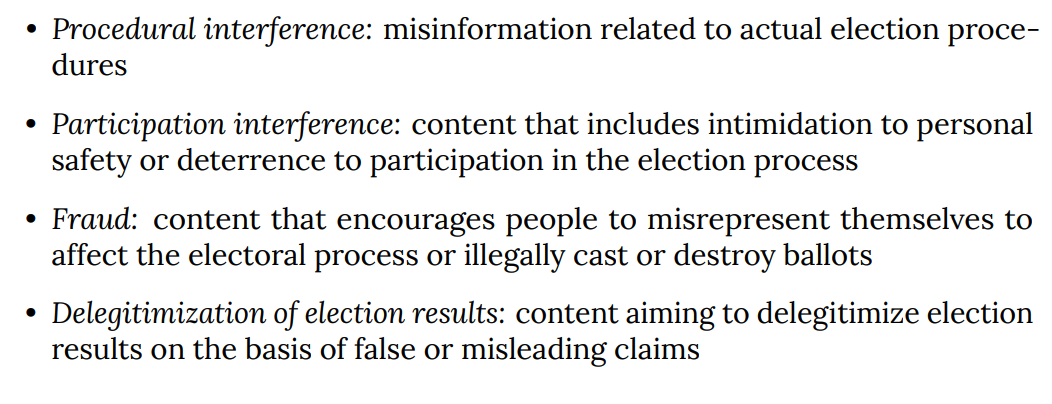

DiResta and her colleagues identified mis- and disinformation and "bad actors" that "enabled these narratives to propagate," "shared clear and accurate counter-messaging," and "built a framework to compare the policies of 15 social media platforms across four categories":

Long Fuse Collaboration/What We Did

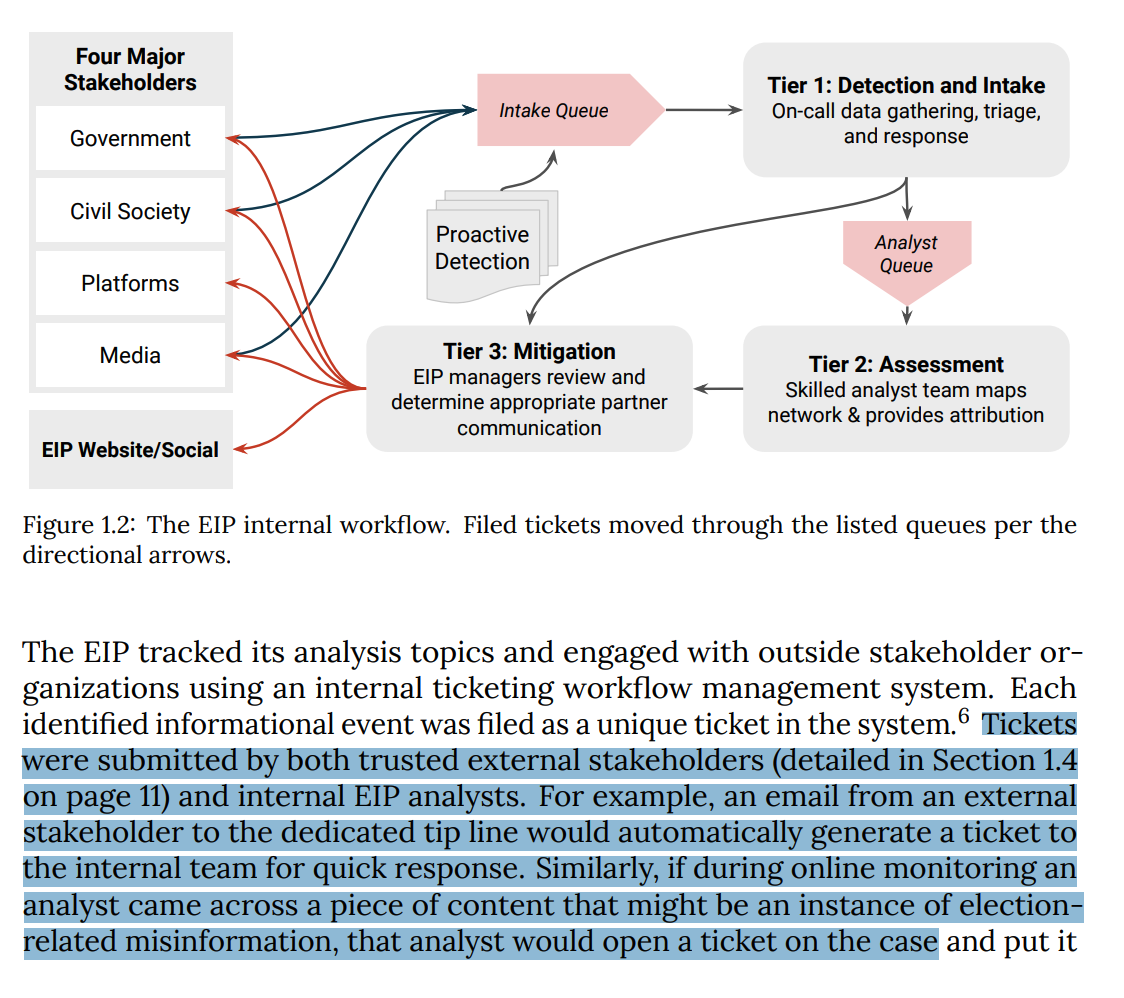

To conduct their research, they created "a tiered analysis model based on 'tickets' collected internally and from our external stakeholders": government, civil society, social media platforms, and media.

EIP/Stakeholders

They allegedly found that 72% of the tickets they processed "were related to the delegitimization of the election." The process flow for ticket submission is illustrated below:

EIP/Ticket Process Flow

They uncovered what they called "warped" stories, meta-narratives of a stolen election, and "cohesive narratives of systemic election fraud" from "right-leaning blue-check influencers." These types of narratives, they wrote, resulted in the "production and spread of misinformation [that] was multidirectional and participatory." According to DiResta and Co., these viral rumors also "leveraged the specific features of each platform for maximum amplification." This activity ultimately led to "the January 6 rally at the White House and the insurrection at the Capitol."

Long Fuse/p.vii

The report's key recommendations for the federal government reinforce what many who have followed this MDM campaign have long suspected. The report emphasizes the need for our federal government to "establish clear authorities and roles for identifying and countering election-related mis- and disinformation" because of the threats of such information to our national security. The report emphasizes the need to work with CISA's Rumor Control and other agencies in the federal government to "create clear standards" for the disclosure of the information they want you to see as mis- and/or disinformation. There are also suggestions to codify bipartisan recommendations to the Senate Select Committee on Intelligence "related to the depoliticization of election security." Finally, "trusted channels," they write, "must be established for voters to include a .gov website and use of both traditional and social media."

It should be noted that baseline operations like this one were finely tuned and quite successful during the pandemic, with Fauci, the CDC, and the media working hand-in-hand to flood the zone with the "correct" information, some of which may have cost people their lives.

DiResta has also participated in other panels on mis- and disinformation. One of her most notable appearances was associated with Prince Harry's Archewell Foundation. The Duke of Sussex and his wife, Meghan, rail against misinformation on the internet because of their personal experiences with it. The Duke says he lost his mother "to this self-manufactured rabidness, and obviously, I'm determined not to lose the mother of my children to the same thing." In November 2021, DiResta appeared on a panel sponsored by Archewell and WIRED called the "Internet Lie Machine." She was joined by many other cyber experts, including the new director of the US Cybersecurity & Infrastructure Security Agency, Jen Easterly. Easterly joined the WIRED panel to "talk about hacking and misinformation on a global scale." She wants to engage "good" hackers to identify threats to our "national critical infrastructure security, in particular election infrastructure."

DiResta most recently published a column for noemamag.com on how "online mobs" behave like flocks of birds in nature, as seen in the video captured below:

Tellingly, one of her missions is to help "examine the structure" of misinformation, disinformation, and propaganda and "rework" the structure. She writes, "the fight over content moderation (and regulatory remedies like revising Section 230) makes us miss opportunities to examine the structure — and, in turn, to address the polarization, factional behavior, and harmful dynamics that it sows." She then muses: "So what would a structural reworking entail? How many birds should we see? Which birds? When?"