Anyone who understands the power of social media and its influencers understands the power of content amplification. Content amplification is one way information goes viral. It is sometimes the way certain information dominates the internet.

The end result of Twitter's new pilot platform roll-out, called Birdwatch, may well be the latest way to help amplify information that, in the company's estimation, should be censored. Given recent statements and actions, it is difficult to think about it differently. However, reading through the supporting documents shows that the company is at least attempting to avoid bias and to seek out viewpoints that diverge from those held by its leadership.

Graphic/Twitter Birdwatch Program

Twitter has selected an initial army of 1,000 "Birdwatchers" who have been enlisted to identify and comment on misinformation in tweets. Twitter's Birdwatch Overview Page says:

"Birdwatch is a community-driven approach to address misinformation on Twitter. Participants can identify Tweets they believe are misleading, write notes that provide context to the Tweet, and rate the quality of other participants’ notes. Through consensus from a broad and diverse set of contributors, our eventual goal is that community-written notes will be visible directly on Tweets, available to everyone on Twitter."

In the current "phase" of the program, the Birdwatch site is separate from the main Twitter platform:

"During this phase, Birdwatch contributions will not affect the way people see Tweets or our system recommendations. Our priority is to understand how to build and adopt a community-based approach that takes input from a diverse set of contributors and identifies the context that people will find most helpful...Eventually, we aim to make notes visible directly on Tweets for the global Twitter audience when there is consensus from a broad and diverse set of contributors."

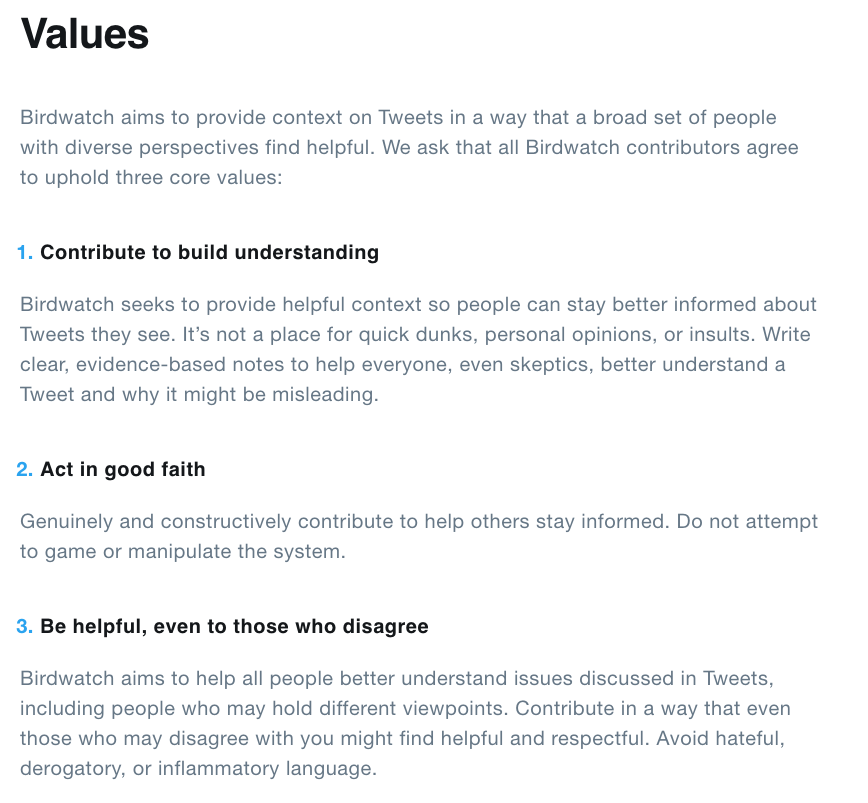

The "values" page seems to promote balanced contributions when composing notes for tweets. The goal here is to "provide context on Tweets in a way that a broad set of people with diverse perspectives find helpful." The guidelines call for evidence-based notes, good-faith submissions, and respectful behavior, and they reject name-calling and "hateful, derogatory, or inflammatory language," all seemingly reasonable and fair guidelines.

Graphic/Twitter Birdwatch Program Values

Birdwatch has a rating/ranking system in the current phase for the notes that its contributors submit on tweets. The rating system is "basic" for now but, regardless, it looks as if Twitter will build algorithms based on its database of human notes and ratings of those notes.

Twitter is also working with outside organizations to develop the Birdwatch Program. A member of the University of Chicago's RISC program is "embedded" on the team. The RISC program studies and promotes programs like criminal justice reform, partners with global NGOs on issues like food security and schools, and promotes climate change policies and "environmental protection," among other initiatives. While these can be noble pursuits, they are pursuits that tend to be prioritized by certain groups of people.

The Birdwatch Program has also been built through "hosting feedback sessions with reporters and researchers, [we’re] integrating social science and academic perspectives like behavioral economics, social psychology, political science and procedural justice into our product development process."

One section of the FAQ page on the Birdwatch site, titled "How will attempts to abuse or manipulate Birdwatch be prevented?", discusses the company's stated intention to include "Birdwatchers" in its ranks who represent a "wide range of people with diverse views. This obviously won’t be true if it can be taken over by a single group or ideology, or used in an abusive manner," the document explains.

The program will also try to minimize the coordinated manipulation that is often seen in online communities. The site states that it will put in place mechanisms that are "resistant to manipulation attempts to ensuring it isn’t dominated by a simple majority or bias[ed] based on its distribution of contributors." During the development process, the people involved held feedback sessions with Twitter customers and "subject area experts" to better inform themselves about identifying and controlling manipulation of the platform. The Challenges Page states the following:

"We are also focused on increasing the diversity of participants in our initial pilot phase. If we have more applicants than pilot slots, we will randomly admit accounts, prioritizing accounts that tend to follow and engage with different audiences and content than those of existing participants, so as to reduce the likelihood that participants would be predominantly from one ideology, background, or interest space. We will do the same for new sets of participants admitted after the initial pilot phase."

Importantly, under the Rules Section of the "Signing Up" page of the document, Twitter states the following:

"Contributions are also subject to Twitter Rules, Terms of Service, and Privacy Policy. Failure to abide by the rules can result in removal from the Birdwatch pilot, and/or other remediations. Anyone can report notes they believe aren’t in accordance with those rules by clicking or tapping the ••• menu on a note, and then selecting “Report”, or by using this form."

This is important because, in recent weeks and months, there has been what appears to be a significant bias against conservative accounts on the platform, resulting in their removal. In some cases, account holders and their tweets were deleted even though the users had followed those very rules and terms.

Many important independent influencers and leaders, including UncoverDC's Tracy Beanz, President Trump, and General Flynn's lawyer, Sidney Powell, have been completely erased from the platform without warning or recourse. If the Twitter organization truly wishes to ensure that Birdwatch isn't "dominated by a simple majority or bias based on its distribution of contributors," it certainly has made it difficult to trust its intentions when it has been demonstrably biased in its actions.

Graphic/FactBias/EssentiaAnalytics.com

Bias and its weaponization in the form of censorship on the internet is a complex subject. Reams of studies, books, and articles examine bias. The language of bias is an even deeper discussion. The language we use to categorize, describe, relate, and communicate is highly subjective. The ways one culture or group might communicate may deeply offend another. Algorithms are a fundamental building block of interaction on the internet. There are whole fields of study dedicated to removing bias from algorithms. Our only defense against censorship is to raise our awareness of how things work and who is planning what, and to staunchly uphold, where possible, the Constitutional rights we have been given.

Two things seem to be true. One is that Twitter has already shown a propensity to target certain users and audiences. And, two, whether we like it or not, we all suffer from bias no matter how objective we might pretend to be. Let's hope that Twitter truly intends to guard against shutting down the fullness of our American dialogue. Our country depends on it.